Arash Abadpour, “Rederivation of the Fuzzy-Possibilistic Clustering Objective Function through Bayesian Inference”, Fuzzy Sets and Systems, Volume 305, 15 December 2016, Pages 29–53 (url) (pdf) (code). [Connie]

Unsupervised clustering of a set of datums into homogenous groups is a primitive operation required in many signal and image processing applications. In fact, different incarnations and hybrids of Fuzzy C–Means (FCM) and Possibilistic C–means (PCM) have been suggested which address additional requirements such as accepting weighted sets and being robust to the presence of outliers. Nevertheless, arriving at a general framework, which is independent of the datum model and the notion of homogeneity of a particular problem class, is a challenge. However, this process has not been followed organically and clustering algorithms are generally based on exogenous objective functions which are heuristically engineered and are believed to lead to the satisfaction of a required behavior. These techniques also commonly depend on regularization coefficients which are to be set “prudently” by the user or through separate processes. In contrast, in this work, we utilize Bayesian inference and derive a robustified objective function for a fuzzy–possibilistic clustering algorithm by assuming a generic datum model and a generic notion of cluster homogeneity. We utilize this model for the purpose of cluster validity assessment as well. We emphasize the epistemological importance of the theoretical basis on which the developed methodology rests. At the end of this paper, we present experimental results to exhibit the utilization of the developed framework in the context of four different problem classes.

Arash Abadpour, “Incorporating spatial context into fuzzy-possibilistic clustering using Bayesian inference”, Journal of Intelligent and Fuzzy Systems, Volume 30, No. 2, February 2016, Pages 895-919 (pdf) (url). [Calista]

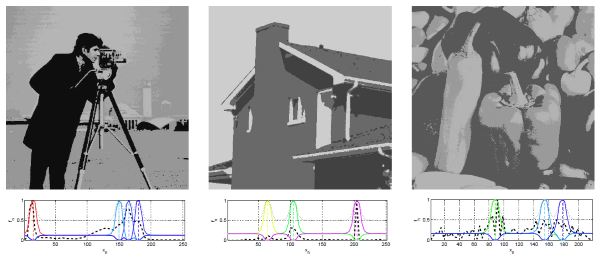

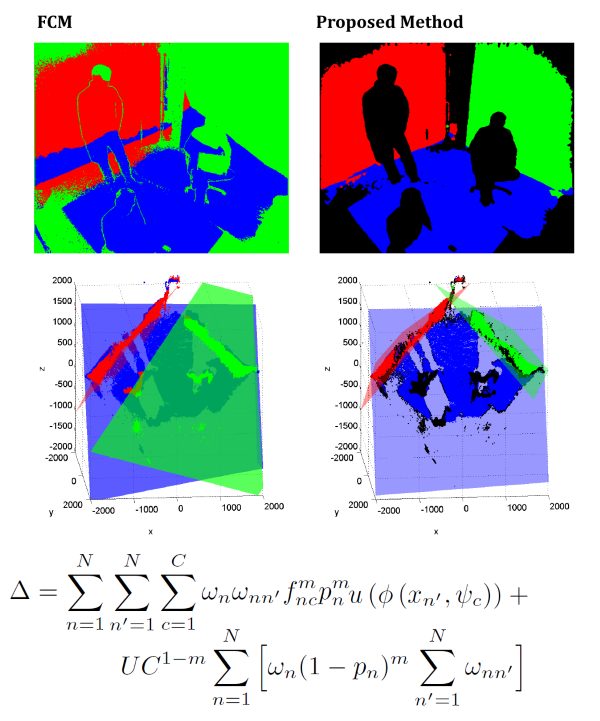

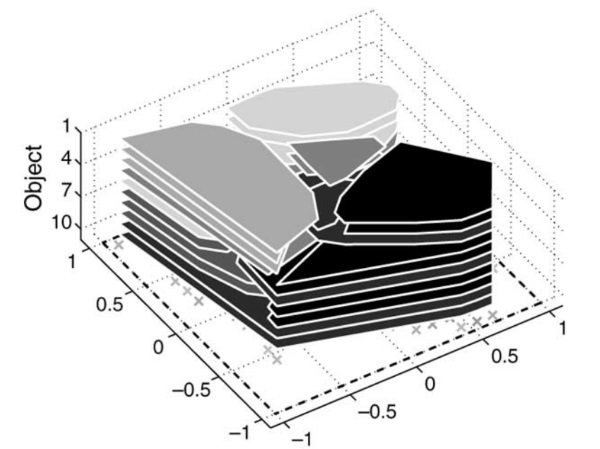

Data clustering is the generic process of splitting a set of datums into a number of homogenous sets. Nevertheless, although a clustering process inputs datums as a set of separate mathematical objects, these entities are in fact correlated within a spatial context specific to the problem class at hand. For example, when the data acquisition process yields a 2D matrix of regularly sampled measurements, as is the case with image sensors which utilize different modalities, adjacent datums are highly correlated. Hence, the clustering process must take into consideration the spatial context of the datums. A review of the literature, however, reveals that a significant majority of the well-established clustering techniques ignore spatial context. Other approaches, which do consider spatial context, either utilize pre- or post-processing operations or engineer into the cost function one or more regularization terms which reward spatial contiguity. We argue that employing cost functions and constraints based on heuristics and intuition is a hazardous approach from an epistemological perspective. This is in addition to the other shortcomings of those approaches. Instead, in this paper, we apply Bayesian inference to the clustering problem and construct a mathematical model for data clustering which is aware of the spatial context of the datums. This model utilizes a robust loss function and is independent of the notion of homogeneity relevant to any particular problem class. We then provide a solution strategy and assess experimental results generated by the proposed method in comparison with the literature and from the perspective of computational complexity and spatial contiguity.

Arash Abadpour, “A Sequential Bayesian Alternative to the Classical Parallel Fuzzy Clustering Model”, Information Sciences, Volume 318, October 2015, Pages 28-47 (url) (pdf) (full text) (printed copy). [Belinda]

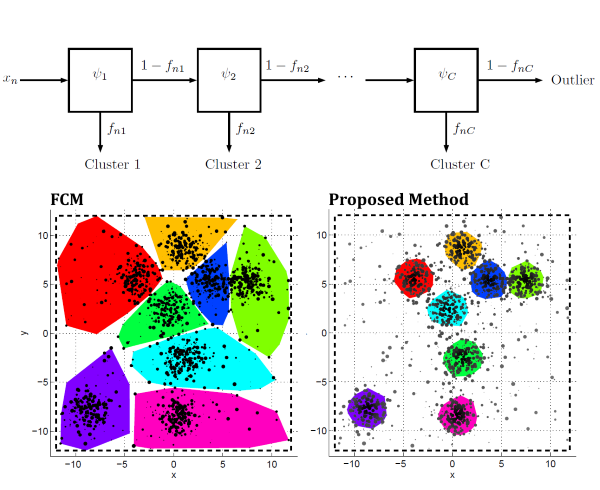

Unsupervised separation of a group of datums of a particular type, into clusters which are homogenous within a problem class-specific context, is a classical research problem which is still actively visited. Since the 1960’s, the research community has converged into a class of clustering algorithms, which utilizes concepts such as fuzzy/probabilistic membership as well as possibilistic and credibilistic degrees. In spite of the differences in the formalizations and approaches to loss assessment in different algorithms, a significant majority of the works in the literature utilize the sum of datum-to-cluster distances for all datums and all clusters. In essence, this double summation is the basis on which additional features such as outlier rejection and robustification are built. In this work, we revisit this classical concept and suggest an alternative clustering model in which clusters function on datums sequentially. We exhibit that the notion of being an outlier emerges within the mathematical model developed in this paper. Then, we provide a generic loss model in the new framework. In fact, this model is independent of any particular datum or cluster models and utilizes a robust loss function. An important aspect of this work is that the modeling is entirely based on a Bayesian inference framework and that we avoid any notion of engineering terms based on heuristics or intuitions. We then develop a solution strategy which functions within an Alternating Optimization pipeline.
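For reference, the classical double summation that this abstract revisits can be illustrated with a minimal Fuzzy C-Means sketch. This is the textbook parallel model, not the sequential model proposed in the paper. Scalar datums, squared Euclidean distance, the fuzzifier `m = 2`, and the function names `update_memberships`, `fcm_1d`, and `fcm_objective` are all assumptions made for illustration.

```python
def update_memberships(data, centers, m=2.0, eps=1e-12):
    """Membership of each datum in each cluster; each row sums to one."""
    exponent = -1.0 / (m - 1.0)
    memberships = []
    for x in data:
        # Weight is the squared distance raised to -1/(m-1); eps avoids division by zero.
        inv = [((x - v) ** 2 + eps) ** exponent for v in centers]
        total = sum(inv)
        memberships.append([w / total for w in inv])
    return memberships


def fcm_1d(data, centers, m=2.0, iterations=50):
    """Alternate between membership updates and center updates."""
    centers = list(centers)
    for _ in range(iterations):
        memberships = update_memberships(data, centers, m)
        for c in range(len(centers)):
            # Each center is the mean of the data weighted by membership**m.
            weights = [u[c] ** m for u in memberships]
            centers[c] = sum(w * x for w, x in zip(weights, data)) / sum(weights)
    return centers, update_memberships(data, centers, m)


def fcm_objective(data, centers, memberships, m=2.0):
    """Double summation over all datums and all clusters."""
    return sum(
        (u[c] ** m) * (x - v) ** 2
        for x, u in zip(data, memberships)
        for c, v in enumerate(centers)
    )


if __name__ == "__main__":
    data = [0.0, 0.1, 0.2, 5.0, 5.1, 5.2]
    centers, memberships = fcm_1d(data, [0.0, 1.0])
    print(centers, fcm_objective(data, centers, memberships))
```

Every datum contributes to every cluster in `fcm_objective`, which is why features such as outlier rejection are typically added on top of this double sum as extra terms.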

Arash Abadpour, “On applications of pyramid doubly joint bilateral filtering in dense disparity propagation”, 3D Research, Volume 5, Number 2, April 2014, Pages 1-20 (url) (pdf). [Margo]

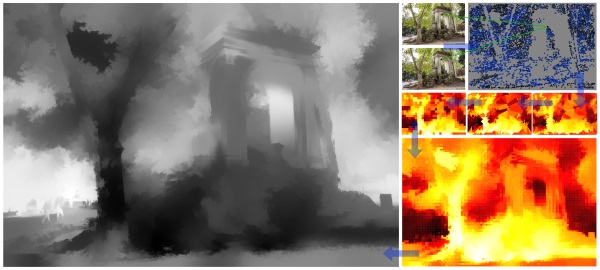

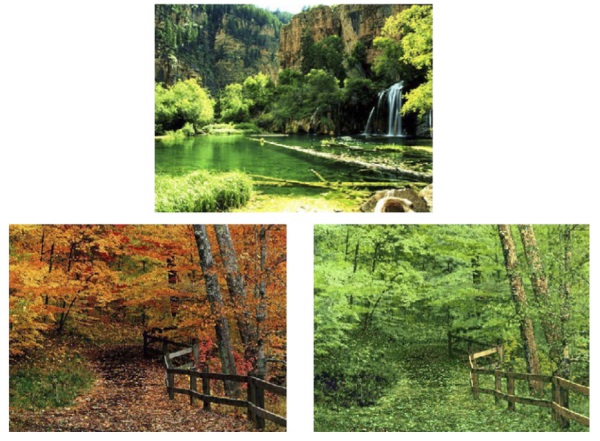

Stereopsis is the basis for numerous tasks in machine vision, robotics, and 3D data acquisition and processing. In order for the subsequent algorithms to function properly, it is important that an affordable method exists that, given a pair of images taken by two cameras, can produce a representation of disparity or depth. This topic has been an active research field since the early days of work on image processing problems and rich literature is available on the topic. Joint bilateral filters have been recently proposed as a more affordable alternative to anisotropic diffusion. This class of image operators utilizes correlation in multiple modalities for purposes such as interpolation and upscaling. In this work, we develop the application of bilateral filtering for converting a large set of sparse disparity measurements into a dense disparity map. This paper develops novel methods for utilizing bilateral filters in joint, pyramid, and doubly joint settings, for purposes including missing value estimation and upscaling. We utilize images of natural and man-made scenes in order to exhibit the possibilities offered through the use of pyramid doubly joint bilateral filtering for stereopsis.
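The idea of converting sparse disparity measurements into a dense map can be sketched with a plain joint bilateral filter. The sketch below is a generic single-scale illustration, not the pyramid or doubly joint variants developed in the paper. The function name `joint_bilateral_fill`, the use of `None` for missing disparities, and the parameters `radius`, `sigma_s`, and `sigma_r` are assumptions made for this example.

```python
import math


def joint_bilateral_fill(disparity, guide, radius=2, sigma_s=1.5, sigma_r=0.1):
    """Estimate a dense disparity map from sparse samples.

    `disparity` holds known values or None; `guide` is a same-sized
    intensity image. Each output is a normalized weighted average of the
    known disparities in a window, weighted by spatial closeness and by
    similarity in the guide image (not in the disparity itself).
    """
    rows, cols = len(guide), len(guide[0])
    dense = [[None] * cols for _ in range(rows)]
    for i in range(rows):
        for j in range(cols):
            num = den = 0.0
            for p in range(max(0, i - radius), min(rows, i + radius + 1)):
                for q in range(max(0, j - radius), min(cols, j + radius + 1)):
                    d = disparity[p][q]
                    if d is None:
                        continue  # only measured disparities contribute
                    w_s = math.exp(-((p - i) ** 2 + (q - j) ** 2) / (2 * sigma_s ** 2))
                    w_r = math.exp(-((guide[p][q] - guide[i][j]) ** 2) / (2 * sigma_r ** 2))
                    num += w_s * w_r * d
                    den += w_s * w_r
            # Pixels with no usable measurement in the window stay missing.
            dense[i][j] = num / den if den > 0 else None
    return dense


if __name__ == "__main__":
    guide = [[0.0, 0.0, 0.0, 1.0, 1.0, 1.0]]
    sparse = [[10.0, None, None, None, None, 20.0]]
    print(joint_bilateral_fill(sparse, guide))
```

Because the range weight is computed on the guide image, disparities propagate within regions of similar intensity and are suppressed across intensity edges.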

Arash Abadpour, Attahiru Sule Alfa, and Anthony C.K. Soong, “Approximation Algorithms For Maximizing The Information-Theoretic Sum Capacity of Reverse Link CDMA Systems”, AEU – International Journal of Electronics and Communications, Volume 63, Issue 2, 4 February 2009, Pages 108-115. (pdf) (html)

Since the introduction of multimedia services to CDMA systems, many researchers have been working on the maximization of the aggregate capacity of the reverse link. This problem looks for the optimal set of transmission powers of the stations subject to a set of constraints. One of the research directions in this field is to devise a practically realistic set of constraints and then to propose an algorithm for solving the resulting problem. Through a unified approach, introduced recently by the authors, a more general investigation of the problem, equipped with a wide range of constraints, is possible. Here, we go further and propose an approximation to reshape the objective function into a more conveniently workable one. Then, we analyze the three available formulations of the problem and show that integrating this approximation into the available algorithms has the benefit of reducing the computational cost. The paper includes the mathematics involved in the approximation and its integration into the algorithms. Also, we analyze examples to demonstrate the achievements of the proposed method.

Arash Abadpour and S. Kasaei. “A Novel Color Image Compression Method using Eigenimages”, Journal of Iranian Association of Electrical and Electronics Engineers (IAEEE), Vol. 5, No. 2, Fall and Winter 2008. (pdf, url).

Since the birth of multi–spectral imaging techniques, there has been a tendency to consider and process this new type of data as a set of parallel gray–scale images, instead of an ensemble of an $n$–D realization. Although, even now, some researchers make the same assumption, it has been proved that using vector geometries leads to better results. In this paper, first a method is proposed to extract the eigenimages from a color image. Then, using the energy compaction of the proposed method, a new color image compression method is proposed and analyzed. The proposed compression method, which uses vector–based operations, applies a grayscale compression algorithm on the eigenimages. Experimental results show that, at the same bandwidth, the proposed method produces $3.6$dB and $1.9$dB enhancement in the quality, compared to JPEG and JPEG2000, respectively.

Arash Abadpour and S. Kasaei, “Principal Color and Its Application to Color Image Segmentation”, Scientia Iranica, Volume 15, No. 2, April 2008, Pages 238-245 (pdf) (html) (web).

Color image segmentation is a primitive operation in many image processing and computer vision applications. Accordingly, there are numerous segmentation approaches in the literature, something which can be misleading for a researcher who is looking for a practical algorithm. While many researchers are still using the tools which belong to the old color space paradigm, there is evidence in research established in the eighties that a proper descriptor of color vectors should act locally in the color domain. In this paper, we use these results to propose a new color image segmentation method. The proposed method searches for the principal colors, defined as the intersections of the cylindrical representations of homogeneous blocks of the given image. As such, rather than using the noisy individual pixels, which may contain many outliers, the proposed method uses the linear representation of homogeneous blocks of the image. The paper includes a comprehensive mathematical discussion of the proposed method and experimental results to show the efficiency of the proposed algorithm.

Arash Abadpour and Attahiru Sule Alfa, “Approximate Algorithms for Maximizing the Capacity of the Reverse Link in Multiple-Class CDMA System”, in Operations Research and Cyber-Infrastructure, M. J. Saltzman, J. W. Chinneck, and B. Kristjansson, Editors, Springer, 2008, Pages: 237-252. (pdf) (html)

Code Division Multiple Access (CDMA) has proved to be an efficient and stable means of communication between a group of users which share the same physical medium. Therefore, with the rising demands for high-bandwidth multimedia services on mobile stations, it has become necessary to devise methods for more rigorous management of capacity in these systems. While a major method for regulating capacity in CDMA systems is through power control, the mathematical complexity of the corresponding model inhibits useful generalizations. In this paper, a linear and a quadratic approximation for the aggregate capacity of the reverse link in a CDMA system are proposed. It is shown that the error induced by the approximations is reasonably low and that rewriting the optimization problem based on these approximations makes the implementation of the system in a multiple-class scenario feasible. This issue has been outside the scope of the available methods, which work on producing an exact solution to a single-class problem.

Arash Abadpour and S. Kasaei. “Color PCA Eigenimages and Their Application to Compression and Watermarking”, IEE Image & Vision Computing, Volume 26, Issue 7, July 2008, Pages 878–890 (pdf) (html).

From the birth of multi–spectral imaging techniques, there has been a tendency to consider and process this new type of data as a set of parallel gray-scale images, instead of an ensemble of an n-D realization. However, it has been proved that using vector-based tools leads to a more appropriate understanding of color images and thus more efficient algorithms for processing them. Such tools are able to take into consideration the high correlation of the color components and thus to successfully carry out energy compaction. In this paper, a novel method is proposed to utilize the principal component analysis in the neighborhoods of an image in order to extract the corresponding eigenimages. These eigenimages exhibit high levels of energy compaction and thus are appropriate for such operations as compression and watermarking. Subsequently, two such methods are proposed in this paper and their comparison with available approaches is presented.

Arash Abadpour, Attahiru Sule Alfa, and Anthony C.K. Soong, “Closed Form Solution for Maximizing the Sum Capacity of Reverse-Link CDMA System with Rate Constraints”, IEEE Transactions on Wireless Communications, Volume 7, Issue 4, April 2008, Page(s):1179 – 1183 (pdf) (html).

The information-theoretic maximum system capacity in a CDMA network, which guarantees fairness to mobile stations, can be achieved by optimally allocating transmit powers of the mobile stations while imposing individual QoS constraints. Even though this optimization is carried out in a multi-dimensional space, we show that the optimal solution can be reduced to a search in a one-dimensional space. This considerably reduces the computational effort required. In the current paper, we first show that the previous results can be obtained by a simpler and more intuitive approach. In addition, we show that the candidate solutions can be determined in closed form. Then, we analyze the solution to the problem and the effects of different parameters.

Arash Abadpour, Attahiru Sule Alfa, and Jeff Diamond, “Video-on-Demand Network Design And Maintenance Using Fuzzy Optimization”, IEEE Transactions on Systems, Man, and Cybernetics, Part B, April 2008, Volume 38, Issue 2, Page:404-420 (pdf) (html).

Video-on-Demand (VoD) is the entertainment source which, in the future, will likely overtake regular television in many aspects. Even though many companies have deployed working VoD services, some aspects of VoD still require further improvement in order for it to reach its foreseen potential. An important aspect of a VoD system is the underlying network in which it operates. Given the huge number of customers in this network, it should be carefully designed to fulfill certain performance criteria. This process should be capable of finding optimal locations for the nodes of the network as well as determining the content which should be cached in each one. While this problem falls in the general group of network optimization problems, its specific characteristics demand a new solution. In this paper, inspired by the successful use of fuzzy optimization in similar problems in other fields, a fuzzy objective function is derived which is heuristically shown to minimize the communication cost in a VoD network, while also controlling the storage cost. Then, an iterative algorithm is proposed to find a locally optimal solution to the proposed objective function. Capitalizing on the non-repeatable tendency of the proposed algorithm, a heuristic method for picking a good solution, from a bundle of solutions produced by the proposed algorithm, is also suggested. This paper includes a formal statement of the problem and its mathematical analysis. Also, different scenarios in which the proposed algorithm can be utilized are discussed.

Arash Abadpour and S. Kasaei. “An Efficient PCA-based Color Transfer”, Visual Communication & Image Representation, February 2007, Volume 18, Number 1, Pages 15-34 (pdf)

Color information of natural images can be considered as a highly correlated vector space. Many different color spaces have been proposed in the literature with different motivations toward modeling and analysis of this stochastic field. Recently, color transfer among different images has been under investigation. Color transfer comprises two major categories: colorizing grayscale images and recoloring colored images. The literature contains a few color transfer methods that rely on some standard color spaces. In this paper, taking advantage of the principal component analysis (PCA), we propose a unifying framework for both of these problems. The experimental results show the efficiency of the proposed method. The performance comparison of the proposed method is also given.

Arash Abadpour, S. Kasaei, S. Mohsen Amiri, “Fast Registration of Remotely-Sensed Images for Earthquake Damage Estimation”, EURASIP Journal on Applied Signal Processing, Volume 2006 (2006), Article ID 76462. (pdf) (web)

Analysis of the multispectral remotely sensed images of the areas destroyed by an earthquake has proved to be a helpful tool for destruction assessment. The performance of such methods is highly dependent on the preprocessing step that registers the two shots taken before and after an event. In this paper, we propose a new fast and reliable change detection method for remotely sensed images and analyze its performance. The experimental results show the efficiency of the proposed algorithm.

Arash Abadpour and S. Kasaei. “Unsupervised, Fast and Efficient Color Image Copy Protection”, IEE Proceedings Communications, October 2005, Volume 152, Issue 5, Pages 605-616. (pdf)

The ubiquity of broadband digital communications and mass storage in modern society has stimulated the widespread acceptance of digital media. However, easy access to royalty-free digital media has also resulted in a reduced perception in society of the intellectual value of digital media and has promoted unauthorised duplication practices. To detect and discourage the unauthorised duplication of media, researchers have investigated watermarking methods to embed ownership data into media. However, some authorities have expressed doubt over the efficacy of watermarking methods to protect digital media. The paper introduces a novel method to discourage unauthorised duplication of digital images by introducing reversible, deliberate distortions to the original image. The resultant image preserves the image size and essential content with distortions in edge and colour appearance. The proposed method also provides an efficient reconstruction process using a compact key to reconstruct the original image from the distorted image. Experimental results indicate that the proposed method can achieve effective robustness towards attacks, while its computational cost and quality of results are completely practical.

Arash Abadpour and S. Kasaei. “Pixel-based Skin Detection for Pornography Filtering”, Iranian Journal of Electrical and Electronic Engineering (IJEEE), July 2005, Volume 1, No. 3, Pages 21-41. (pdf)

A robust skin detector is the primary need of many fields of computer vision, including face detection, gesture recognition, and pornography filtering. Less than 10 years ago, the first paper on automatic pornography filtering was published. Since then, different researchers have claimed different color spaces to be the best choice for skin detection in pornography filtering. Unfortunately, no comprehensive work has been performed on evaluating different color spaces and their performance for detecting naked people. As a result, researchers refer to the results on skin detection for face detection, which involves different imaging conditions. In this paper, we examine 21 color spaces in all their possible representations for pixel-based skin detection in pornographic images. As such, this paper presents the largest investigation in the field of skin detection, and the only one run on pornographic images.